By Oliver Brayshaw

Computers have had a large impact on both musical artists and performers, from DAWs (Digital Audio Workstations) and applications to online platforms and hardware. I will present ideas from both the creative and the performance perspectives of music, while also discussing the impact one may have on the other. For clarity, when this article refers to ‘creation’, this comprises composition, arrangement, capture, engineering and mixing. Likewise, a ‘musician’ refers to a creator of music in any of these senses.

Performance

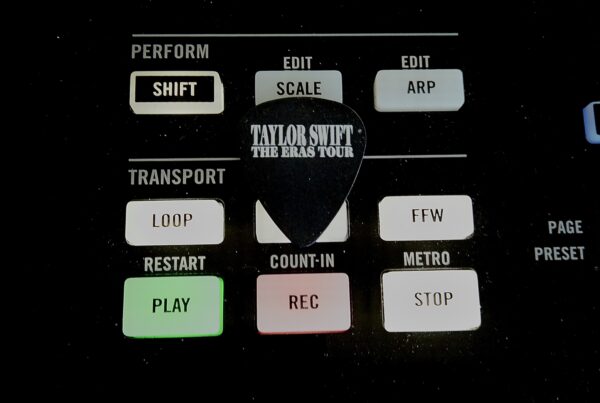

In the 21st century, the use of computers for live performance is not uncommon. With software like Ableton Live, MainStage and Digital Performer, performers are able to program and play back backing tracks in a live setting. Often, the responsibility for overseeing the computer is left to the drummer. Eric Downs, a prominent figure in the live and session recording scene of Los Angeles, presents these responsibilities in his YouTube video Anatomy of a Playback Rig (2017), stating that drummers now not only need to set up their kit for shows, but must also set up a playback rig. This has brought a prominent shift in the way drummers perform: because backing tracks are played back at a fixed tempo, the drummer must follow a click track in many situations so that the live parts and the tracks stay together. Prior to this trend, drummers would have had more leniency when it came to tempo, with some performances shifting tempo mid-song, whether subtly or not.
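The fixed-tempo constraint described above comes down to simple arithmetic: at a given tempo, every click falls at a known moment. The following is a minimal sketch of that idea, not a description of how any particular playback rig works. The function name `click_times` and the tempo of 120 BPM are assumptions chosen purely for illustration.

```python
def click_times(bpm: float, beats: int) -> list[float]:
    """Return the time in seconds of each click at a fixed tempo."""
    seconds_per_beat = 60.0 / bpm  # one beat lasts 60 / BPM seconds
    return [beat * seconds_per_beat for beat in range(beats)]


# One bar of 4/4 at an assumed 120 BPM: a click every half second.
print(click_times(120, 4))  # [0.0, 0.5, 1.0, 1.5]
```

Because the backing track is locked to this grid, any drift by the drummer moves the live parts away from the pre-programmed ones, which is why the click becomes necessary.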

The role of the drummer now often involves the use of triggers as an extension of the acoustic kit. George Daniel, of pop band The 1975, uses Roland BT and RT triggers to incorporate the samples used on their records into their live shows. In a video published on the Roland YouTube channel (Roland UK 2014), Daniel shows how he is able to layer a distorted snare sample with his acoustic snare, while only making contact with the latter. This brings studio recordings and live performances much closer, through the use of sounds that would otherwise be extremely difficult for a performer to recreate organically. This is a contrast to many artists in the previous century, who often started with a live performance of a track and worked to capture it in a recording. Bands like The 1975 are beginning in the DAW and translating their recordings into live performances. To summarise, a growing practice in modern music is to work out how to perform a studio recording, as opposed to how to successfully capture a live performance.

Vocalists of the 21st century also have more tools at their disposal for performances, with some computer-based technologies serving as an extension of the musician’s capabilities. The TC Helicon VoiceLive is a multi-effects unit including reverbs, harmonies and modulations. This allows vocalists to create harmonies based on their actual performance, rather than relying on pre-recorded harmonies as part of a backing track. In addition, the ability to modify and control these effects at a live show allows the vocalist to present a more overt sense of musicianship. With the pitch shift effects, the unit gives musicians the ability to create sounds that they would not otherwise be physically able to produce, such as notes in an unnaturally low or high octave. The performer is therefore much less restricted and can utilise less natural but more creative sounds.
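The relationship between a sung note and a shifted octave or harmony can be expressed as a frequency ratio. The sketch below assumes twelve-tone equal temperament, in which each semitone multiplies the frequency by the twelfth root of two. It illustrates the arithmetic only and makes no claim about how the VoiceLive itself processes audio. The function name `shifted_frequency` and the 440 Hz starting note are illustrative assumptions.

```python
def shifted_frequency(frequency_hz: float, semitones: float) -> float:
    """Return the frequency after shifting by a number of semitones.

    Assumes equal temperament: each semitone is a factor of 2 ** (1 / 12).
    Negative values shift down, positive values shift up.
    """
    return frequency_hz * 2.0 ** (semitones / 12.0)


sung_note = 440.0  # assumed sung pitch (A above middle C)

# An octave below the sung note, lower than many voices can reach.
print(shifted_frequency(sung_note, -12))  # 220.0

# A harmony a major third (four semitones) above, roughly 554.37 Hz.
print(shifted_frequency(sung_note, 4))
```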

The settings in which a musician can perform have been opened to the realms of the internet, with platforms like YouTube and Facebook serving as a stage that can be viewed by an audience anywhere in the world. An artist’s performance for online distribution is often adapted for the format. For example, Audiotree bridge the gaps in an artist’s set with questions and conversations with a host, filling a silence that would usually be filled by interaction with a crowd or by musical interludes. The way musicians react to the lack of a physical and present audience is often evident in the way they perform their tracks, with more interaction between band members, perhaps as a form of reassurance or to evoke energy that would otherwise have been fuelled by the crowd and its venue.

Computers themselves can be an electronic musician’s instrument, which is an idea that some people are still not used to and some may even reject completely. This could be explained by how recent a concept it is, with many associating traditional instruments with musicians and associating computers with non-human algorithms that cannot express the emotion needed to perform a piece of music. Wessel and Wright (2002) describe and explain the difference between the process of playing a physical instrument and that of performing with an electronic controller. They use the term “one gesture to one acoustic event”, highlighting the intimacy and direct nature of the relationship between musician and instrument. With a controller, this process is not quite as direct: a “sensor” is used to register the user’s gesture, metaphorically sending the information further from the musician. The experience thus becomes less personal, perhaps even separating the musician from the sound itself.

Creation

At the other end of the musician’s spectrum, the options available when creating music have greatly expanded. Musicians are able to simplify the more traditional process of creating by recording good-quality audio with just a mobile phone, allowing them to quickly and easily capture a song demo or idea. This organic process of writing with just a piano, guitar and vocal is captured on Taylor Swift’s 2014 album 1989, which includes early voice memos recorded on an iPhone. The practicality is evident when an artist as prolific as Swift chooses this method over a simple microphone setup in a studio. In addition to this, Swift was able to monetise these recordings by including them on the album, showing that a recording people will listen to can be created with minimal effort, with a resulting low quality that serves an aesthetic purpose and offers insight into the artistic process.

On the other hand, computers have also opened the option to start the creative process within a DAW, with sound banks, virtual instruments and the ability to instantly record ideas. These presets can be useful for inspiration when trialling different timbres and textures; the original sounds can be manipulated to create something unrecognisable, or simply used as they are. The 1975’s hit song ‘Chocolate’ (2013), which peaked at number 19 in the UK singles chart, made use of an Apple percussion loop according to engineer Mike Crossey, who mentions it in Paul Tingen’s ‘Secrets of the Mix Engineers’ (2013) article for Sound on Sound.

Sounds on records that originated in the DAW provide a complete contrast to how progressive rock band Dream Theater, for example, composed in the previous century and continue to do so. In an article for Berklee College of Music (Berklee n.d.), the institution of which they are alumni, Dream Theater explain how they would “start jamming” and “record everything”, before recording the final version in the studio. In this case, the sounds on the record originated from the artists and their respective instruments.

The decision to take a specific approach to composition could be attributed to an external influence. In accordance with Csikszentmihalyi’s systems model of creativity, explained by Sternberg (n.d.), a musician will be influenced by the domain or culture with which they identify or which they are attempting to replicate, and in doing so will reinforce these practices by distributing their work within the very same culture. For example, Dream Theater are associated with the progressive rock culture, and this musical genre generally involves the same practices they employ of “jamming” with the whole band and recording it. Dream Theater have accessed the domain, been influenced by it, and placed the result back in the domain for the next band or consumer to obtain.

The 21st century has seen a development in emulation and simulation technologies, with the DAW possessing countless examples of these. Examples include plug-ins made to emulate tape technologies, like Waves’ J37 Tape, and amp simulators like Native Instruments’ Guitar Rig. Logic Pro X’s factory compressors are based on hardware equivalents, like the Vintage VCA Compressor designed to emulate the SSL G Bus Compressor. Nowadays, given the extensive libraries of the DAW, musicians can even invest in a controller keyboard and have hundreds of types of synth models at their disposal. Mark Marrington discusses the impact of using these plug-ins and, by extension, virtual instruments (Hepworth-Sawyer et al., 2016), highlighting the need for “a certain competency” with regard to how the equivalent hardware would work; a lack of this could result in the user “dabbling”. However, Marrington also points out that this is not necessarily a bad thing, as it could lead to more creative applications. Despite the positives, one could argue that using these processes to create music today bypasses the need for the knowledge, intention and skill that may once have been required to compose.

Computers have allowed almost anybody in this century to be an artist, no matter what their background, training, knowledge or experience. The DAW contains a variety of functions and tools that allow for instant and potentially unnoticeable editing. These include quantising, pitch correction and MIDI input, allowing for rhythmic correction, pitch editing and even no performance at all. Automation also makes it possible to emulate the dynamics and expression a musician would display. It is no longer essential for a musician either to be proficient in playing every instrument or to hire a session musician, saving both time and money in the long run. Similarly, Realtime Music Solutions’ software Sinfonia is a tool used to enhance an orchestral arrangement by adding further instruments and parts for them. Creators can now leave an important part of the compositional and performance process to software that will do it for them. This could potentially lower the number of musicians who would otherwise need to be hired to perform on their respective instruments, but also make composing in unfamiliar styles and for unfamiliar instruments simpler. The replacement of the physical guitar player or drummer has occurred in a number of traditional genre contexts, as seen with djent, which has its origins in metal music. In his article ‘From DJ to djent-step’ (2017), Mark Marrington suggests that this is a result of “open-minded” practices brought on by the encouragement of “machine aesthetics” in the music industry. This is relevant in today’s industry given the more widely accepted use of computer composition and performance in almost every genre, not to mention the fact that this technology is cheaper and easier to access than it has ever been.
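The rhythmic correction that quantising performs can be illustrated with a short example. This is a minimal sketch that snaps each note onset to the nearest point on a fixed grid. It is not the algorithm of any particular DAW, and the function name `quantise`, the tempo and the example timings are all assumptions made for illustration.

```python
def quantise(onsets: list[float], bpm: float, steps_per_beat: int = 4) -> list[float]:
    """Snap note onset times (in seconds) to the nearest grid position.

    With steps_per_beat=4 the grid is in sixteenth notes when the beat is
    a crotchet. Python's round() sends exact ties to the nearest even step.
    """
    grid = 60.0 / bpm / steps_per_beat  # length of one grid step in seconds
    return [round(onset / grid) * grid for onset in onsets]


# Three slightly loose hits at an assumed 120 BPM (grid step of 0.125 s).
played = [0.02, 0.27, 0.49]
print(quantise(played, bpm=120))  # [0.0, 0.25, 0.5]
```

Each hit is pulled onto the grid regardless of how it was played, which is what makes this kind of edit potentially unnoticeable to the listener.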

Accessibility plays a large role in the way musicians can create music, as every Apple computer comes with a DAW pre-loaded on it in the form of GarageBand, a DAW that is not exactly the industry standard but is not completely redundant either. If one were to require an upgrade, DAWs like Logic Pro, Cubase and Ableton all have more than capable versions available for under £200. With DAWs being so common among novices and professionals alike, musicians can create anywhere at any time. Laptops able to run such applications make composition possible while on the move and, coupled with a pair of headphones, creators can do just that without anybody else knowing, never mind caring about the noise. These factors have helped to bridge the gap between the wealthy and the poorer, the virtuoso and the novice. Musicians no longer require a professional producer to aid them in creating an industry-standard record before they can gain any attention or reach as an artist. They are able to create low-quality, passable or even high-standard demos on their own with their own equipment, and distribute them online to a potentially unlimited audience just to be heard and grow a following.

Hardware, software and online capabilities have all had a significant impact on the way musicians perform and create in the 21st century, leading to the development of different genres, types of musician and forms of interaction between artist and audience. With computer technology still a relatively young endeavour, it remains to be seen whether further development will change the music industry for the better or the worse. Given its impact up to this point in history, though, one must assume that the technology and the musician will continue to evolve in tandem.

References

Berklee College of Music (No Date) Dream Theater: Where the Metal Meets the Road. [Internet]. Available from https://www.berklee.edu/bt/191/coverstory.html [Accessed 5 Jan. 2019].

Elise Trouw (2018) Burn [Stream]. [s.l.], Goober Records.

Elise Trouw (2018) Line of Sight [Stream]. [s.l.], Goober Records.

Eric Downs (2017) Anatomy of a Playback Rig [Internet Video]. Available from https://www.youtube.com/watch?v=6H5mS8G3Xe8 [Accessed 12 Dec. 2018].

Hepworth-Sawyer, R., Hodgson, J., Patterson, J.L. and Toulson, R. eds. (2016) Innovation in Music II. Shoreham-by-Sea, Future Technology Press.

Marrington, M. (2017) From DJ to djent-step: Technology and the re-coding of metal music since the 1980s. Metal Music Studies, 3 (2). [s.l.], Intellect.

Roland UK (2014) The 1975 – Roland Hybrid Drums with George Daniel [Internet Video]. Available from https://www.youtube.com/watch?v=UmCHpfErlFQ&t=20s [Accessed 12 Dec. 2018].

Sternberg, R. (No Date) Propulsion Theory of Creative Contributions. [Internet]. Available from http://www.robertjsternberg.com/investment-theory-of-creativity/ [Accessed 29 Dec. 2018].

Taylor Swift (2014) 1989 [CD]. [s.l.], Universal Music Group International.

The 1975 (2013) Chocolate [CD]. [s.l.], Polydor Records.

Tingen, P. (2013) Secrets of the Mix Engineers: Mike Crossey. [Internet]. Available from https://www.soundonsound.com/people/secrets-mix-engineers-mike-crossey [Accessed 15 Dec. 2018].

Wessel, D. and Wright, M. (2002) Problems and Prospects for Intimate Musical Control of Computers. Computer Music Journal, 26 (3), pp. 11-12.